Stacks network users can look forward to increased compute bandwidth and faster transaction processing in the coming weeks and months. Historically the Stacks blockchain has artificially constrained block output. Thanks to the contributions of the Hiro team and others across the Stacks ecosystem, the upcoming launch of SIP-012 will provide improved Clarity cost function implementation and MARF data store, resulting in more productive block output.

Beyond SIP-012, Hiro engineers are working on fee estimation insights that can provide better visibility to miners and users alike on essential transaction fee information as the Stacks fee market grows.

Aaron has a wealth of knowledge on Stacks and Clarity, and I hope you enjoy this episode about Aaron’s introduction to the Stacks ecosystem, what he and the Hiro team work on, and the developments toward a 15x better transaction throughput and processing times on the Stacks blockchain.

— Gina Abrams:

Hello, and welcome to the builder series of Stacker Chats, where we connect with the amazing folks building in the Stacks ecosystem. I'm Gina Abrams and today I'm joined by special guest Aaron Blankstein, Hiro team member and senior contributor in the Stacks ecosystem. Aaron's work has been instrumental in development of the Stacks blockchain, the Clarity smart contract language, and his code is in many repositories for Stacks. Aaron, thank you so much for joining!

— Aaron Blankstein:

Thank you, Gina. I'm happy to be here.

— GA:

Cool. I'm super excited to have you on today because there is so much work happening to scale Stacks through your work and the Hiro team's work. And we're going to dive a little bit deeper into that, but to start, I'm curious about what made you decide to join the Stacks project, this was almost five years ago, above all the other opportunities that you had as a Princeton PhD in computer science?

— AB:

Yeah, sure. When I was wrapping up my PhD program, a lot of the work that I had done during my PhD had focused on computer systems, like the way web applications are deployed today, and work around user privacy on the internet, and how we can build more privacy-focused applications for users. So a lot of my research had covered areas of how Web 2.0 had gone wrong, like all these applications that people had built, that just ingest tons of user data. And so I'd always kind of been interested in, I guess, alternative models for how the internet could work or how web applications could work. At around this time I was contacted by Muneeb and he said, "I have this really great, really far out idea, and I'd love to talk to you about it." And over the course of like an hour-long conversation, he pitched what at that point was a very pie in the sky idea about a new kind of internet where users are in total ownership of their data.

Users would have more of a stake in the applications that are getting built, the applications that they're using, and powering all of this was the Stacks blockchain. I don't think that it was at this point named, it was just like the idea is that this would be powered by some blockchain and the pitch was, "Hey, come and build this." And I thought to myself this really resonates with a lot of the problems that I've seen on the web as it was then, and still is today. And I thought this sounds like a great project to join. It'll be really exciting and maybe we'll build something amazing. I'm glad I made that decision.

— GA:

What a journey being able to see it from inception all the way to now, when there are lots of applications in production, et cetera. So, can you share a little bit more background on some of the responsibilities you've had fulfilling this vision [for a better internet] through the Stacks blockchain and Clarity?

— AB:

Yeah, sure. So over time we started to flesh out what the requirements would be for Stacks 2.0, essentially. In the earliest days of the Stacks blockchain and what was previously the Blockstack Project, the blockchain that we had really only supported certain kinds of things like the BNS (the Blockchain Name Service), and as we wanted to expand that functionality and flesh out what we wanted out of the Stacks blockchain, we came up with the fundamental designs. And as part of that, we knew that we would need a smart contracting language to enable developers to build the rich applications that they want to build without us predetermining the style of all applications that could be built on the blockchain.

Once we knew we wanted a smart contract language, the question was okay, what is the design of this going to look like, and how is it going to be implemented? Over time, we hashed out the design of Clarity, and I was pretty instrumental in that design, in particular in ensuring that the Clarity language wasn't Turing complete, such that it could be analyzed through static analysis to tell you things like whether or not a smart contract function will ever complete, approximately how much it would cost to execute, all sorts of things like that. The Clarity type system, I was pretty instrumental in the design of that. And then also the initial implementation work of Clarity for Stacks 2.0 as well as our proof of transfer algorithm.

— GA:

Thank you. And what team do you work on currently at Hiro? And can you also elaborate on the work that's done by the Hiro team versus the Stacks Foundation, or other independent entities?

— AB:

Yeah, sure. So on the Hiro team, I'm a member of our Stacks platform team, which maybe you'll hear called the SPLAT team. I was instrumental in that abbreviation, so point there. As part of the platform team, I help the Hiro engineers ensure that the developer platform that we have (this encompasses things like the API that powers the Explorer, as well as developer tools like Stacks.js or Clarinet) captures the goings-on of the blockchain. And then I also work a lot on proposing changes, and implementing some of these proposed changes in the Stacks blockchain repo, from the perspective of a Hiro contributor.

Hiro as a company has views about what features the Stacks blockchain should have. And as part of those opinions we submit proposals, those proposals are reviewed by other participants in the ecosystem, like maybe Stacks Foundation, miners, other people like that. And as those are reviewed, we can work on an implementation. So, while Hiro doesn't have full maintenance responsibility of the Stacks blockchain repository, we are contributors to it.

— GA:

Awesome. Thank you. Today in this episode, we're going to focus a lot on scalability and ongoing developments in this realm. You bring this unique perspective of the Stacks blockchain, and how it was developed well before Stacks 2.0, et cetera. Can you provide some high-level context to set the stage on scalability in terms of the recent traction that we're seeing and some of the associated challenges, and growing pains for the network?

— AB:

Yeah, sure. So there's kind of two important pieces of background when thinking about scalability in the Stacks chain and maybe some of the growing pains that we're seeing today. One of these is that the Stacks blockchain is not unique in this design choice, but it is different from the majority of chains that we see today, which is that the Stacks blockchain is an open membership blockchain, meaning anyone can choose to become a miner. You do not need to have some certain value of Stacks to participate in the consensus algorithm. You don't need to register in a BFT protocol. You can just start mining. So, it's open membership. And then two, much like Bitcoin, the Stacks blockchain views decentralization in a pretty Maximalist way. And one of these Maximalist views of decentralization is that not only should anyone be able to become a miner, but the compute resources required to follow the blockchain, should remain relatively modest.

And what we mean by relatively modest is, if you have an off the shelf consumer available computer and a relatively good network connection -- like where you're getting over 10 megabits per second on a regular basis, you should be able to keep up with the blockchain.

"[...]The Stacks blockchain is not unique in this design choice, but it is different from the majority of chains that we see today, which is that the Stacks blockchain is an open membership blockchain, meaning anyone can choose to become a miner."

Now, while these requirements might seem kind of high, with a 10 megabit connection, and relatively recent computer, if you compare it to a lot of other blockchains, they make this assumption that if you are running a node in the network, you're going to be running it in a powerful data center, like either in the cloud, or even stronger data center computer. So, because of these two design choices, there's a couple of impacts. One is that all blocks should be processable on one of these modest machines with a modest connection on the order of like a minute, maybe a little bit faster than that.

The second thing that falls out of this is that blocks should be relatively small. So, in Bitcoin's case, blocks are around two megabytes, and that's what we chose for Stacks as well, as we have the same desired bandwidth requirements. Because of those two things, there's a limit on the number of transactions that can be included in a block. A block can take at most a minute to process and it can be, at most, two megabytes of data. Okay, so that's one constraint.

The second is that when Stacks 2.0 launched, we had very precise measurements of how the Stacks blockchain performed some of its database operations, but not all of the Clarity runtime operations. And so at the time of launch, we took a very pessimistic view of the maximum run time of various Clarity instructions. And we said in the worst case, what this will mean is that blocks will be a little bit faster to process than we expected. Maybe blocks will be 10 seconds to process rather than a minute.

And later on, we'll be able to enable changes either through a cost voting process or a SIP proposed fork to correct those changes. But right then we were just trying to avoid the possibility that we could have like 10 minute blocks, which would prevent people from making forward progress as miners.

So those are the two big design decisions that went into Stacks 2.0, that put us in the position we're in today where there's a lot of traction on the Stacks network, and the block limits are pretty small or pretty conservative. And so we see a backlog of transactions.

— GA:

Thank you. And it sounds like there's a fair amount of opportunity to improve things. So, you've worked closely on a network upgrade proposal called SIP-012, and I'd love to dive deeper in that. There was a forum post that was recently published on the topic. But again, this is a cost only network upgrade. Can you outline some of the key components that are included in the SIP and what those improvements are?

— AB:

Yeah, sure. So, the SIP covers basically two topics. The first topic it covers is how the SIP itself would get activated. How do you measure support for the SIP? How would the SIP once it's implemented be rolled out and how would participants know? How would participants signal and all that kind of stuff? Then the second part of the SIP is really about what the actual changes in the network would be and is where all of the cost changes are.

The cost changes are broken down into a couple different categories, but at a high level, there's two different proposed changes. One of the proposed set of changes is saying, "Okay, we have the Stacks blockchain and a Clarity run time that we have today, but we are assessing the cost of operations in a really, really conservative way." So the question that we were asking is, "Okay, what if we were trying to lower the bounds on that cost assessment and make the cost assessment more closely match what we actually see in the performance of the implementation we have today."

There was a lot of benchmarking work that went into this. There's a project called Clarity benchmarking on GitHub. You can see all of the benchmarks we ran. Basically what these benchmarks are, is you take a bunch of Clarity operations, you measure them on different devices, and you see how long they actually take to process. And then based on that, you adjust how the Clarity virtual machine in the Stacks blockchain counts costs for those operations. So, that's one class of changes.
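The benchmarking approach described here can be sketched roughly as follows. This is a minimal illustration and not the actual Clarity benchmarking code: the function names, the stand-in operation, and the linear cost model `a * n + b` are all assumptions made for the example.

```python
import time

def measure(op, sizes, repeats=5):
    """Time `op(n)` for each input size, keeping the best of `repeats` runs."""
    samples = []
    for n in sizes:
        best = float("inf")
        for _ in range(repeats):
            start = time.perf_counter()
            op(n)
            best = min(best, time.perf_counter() - start)
        samples.append((n, best))
    return samples

def fit_linear(samples):
    """Ordinary least-squares fit of runtime ~ a * n + b."""
    count = len(samples)
    mean_x = sum(x for x, _ in samples) / count
    mean_y = sum(y for _, y in samples) / count
    var_x = sum((x - mean_x) ** 2 for x, _ in samples)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in samples)
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b

def hypothetical_op(n):
    # Stand-in for a benchmarked operation (hypothetical, not a Clarity function).
    return sum(i * i for i in range(n))

if __name__ == "__main__":
    a, b = fit_linear(measure(hypothetical_op, [1_000, 5_000, 10_000, 50_000]))
    print(f"estimated cost: {a:.3e} * n + {b:.3e} seconds")
```

Running the same measurements on several machines and comparing the fitted coefficients is one way to check whether a cost function tracks real performance, which is the spirit of the process described above.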

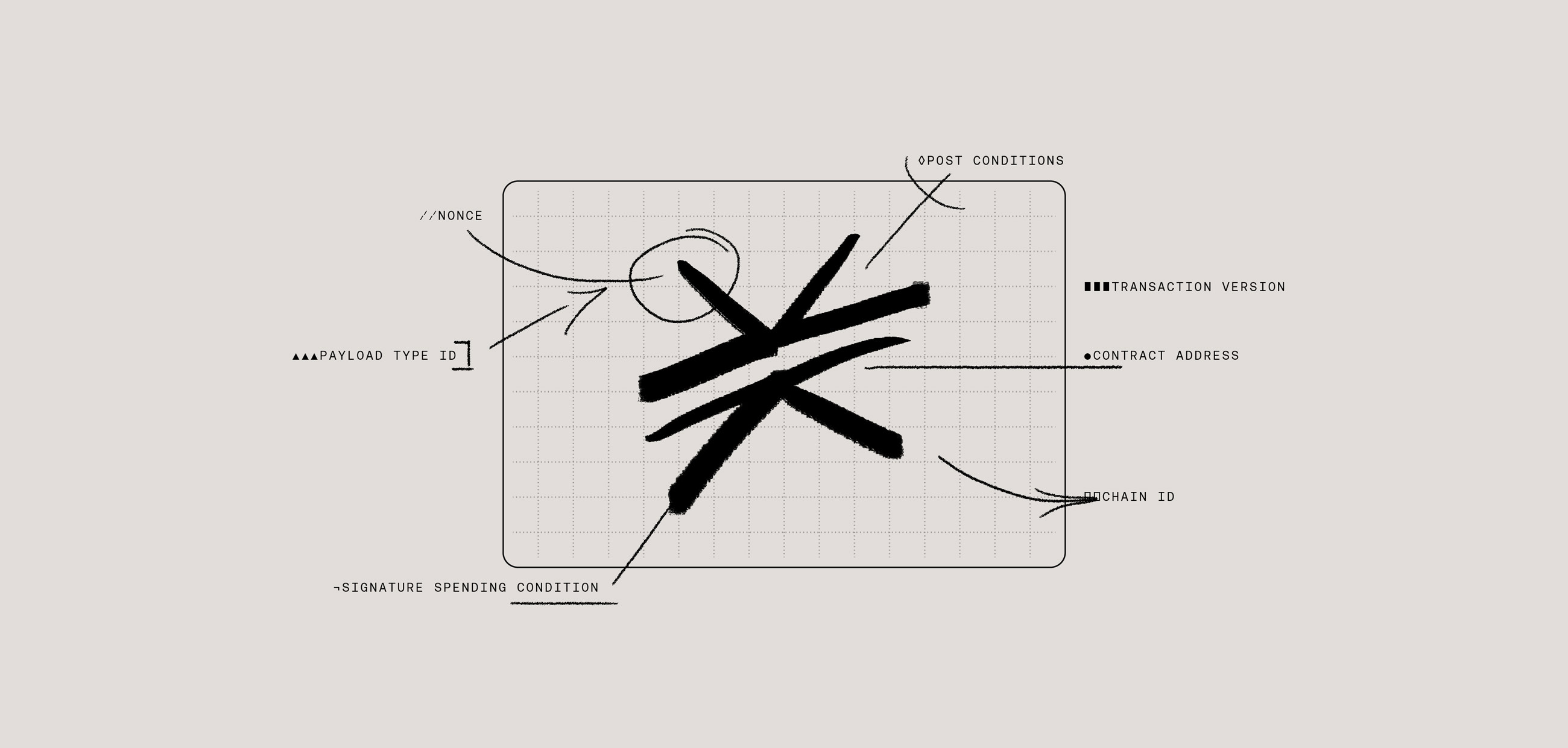

The other class of changes is we looked at the underlying data store used by Clarity smart contracts in the Stacks blockchain, and other parts of the Stacks blockchain. But primarily the smart contracting language uses a data store called a MARF which has a SIP that talks about the design of that data store and how it works.

So we looked at the implementation of that and we said, "Are there any quick wins here where we could speed up the performance of this MARF?" And with that speed up, increase that aspect of the block limit, too. So this is different from the other work, because it's not only measuring what we have today, but it's also trying to improve on it. So, what we found is that with a relatively simple change in the implementation of the MARF we could see like a 3X to 4X speed up in those operations, sometimes even higher. Based on that, we proposed an increase in the MARF dimensions of the block limit.

— GA:

Thank you. And yeah, on that topic of the order of magnitude difference that we might expect with this upgrade, what might we look forward to once it goes live?

— AB:

Yeah, so the answer depends a little bit on the smart contract in question. So for a smart contract, let's say the proof of transfer smart contract, the smart contract was already basically limited by its MARF operations. The number of database operations each contract call was doing, that was what was placing the limit on its methods. And this is almost like a worst case contract for speed up. And in this case you would see a 2X speed up because we're proposing two times more MARF operations per block. So there, you would see a 2X improvement.

Contracts like BNS, a lot of NFT contracts, a lot of these contracts use data store operations, where they have a big list of things that they store. And then they retrieve the list out of the data store. But the list that they're storing is actually smaller than the maximum list that they could possibly store.

SIP-012 changes the way that we assess costs for those operations. We used to assess costs based on the maximum possible list that could have been stored. And now we assess costs more closely to the list that's actually being stored. Those kinds of contracts could see much larger speedups, like easily 3X, 4X, but many of the ones that have really bad or mismatched cost assessments could see like 10X or 15X or higher speed up. So it'll depend on the contracts, but we are going to have a build of Clarinet that allows you to compare, if SIP-012 was implemented, what would be the number of transactions in your smart contract that could fit in a block, and you'd be able to see the speed up between the two of them.
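A simplified sketch of the difference between the two assessments is below. The per-item cost, the list sizes, and the function names are hypothetical; the real cost functions are defined in the SIP and are more involved than a single multiplication.

```python
def cost_by_declared_max(max_len, cost_per_item):
    # Older style of assessment: charge as if the list were completely full.
    return max_len * cost_per_item

def cost_by_actual_length(actual_len, cost_per_item):
    # Assessment that tracks the data actually stored.
    return actual_len * cost_per_item

declared_max = 1000  # hypothetical declared maximum list length
actual = 80          # hypothetical number of items really stored
per_item = 10        # hypothetical cost units per item

old_cost = cost_by_declared_max(declared_max, per_item)
new_cost = cost_by_actual_length(actual, per_item)
speedup = old_cost / new_cost
print(old_cost, new_cost, speedup)
```

With these made-up numbers the assessed cost drops from 10000 to 800 units, a 12.5X difference. The larger the gap between the declared maximum and the data actually stored, the larger the improvement.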

"Some kinds of contracts could see much larger speedups, like easily 3X, 4X, but many of the ones that have really bad or mismatched cost assessments could see like 10X or 15X or higher speed up."

— GA:

Awesome. Thank you. What might your advice be for developers who are building on Stacks, both those who have contracts live today and what they might expect through this change, and also folks who are looking to launch in the next couple of months? What recommendations might you have?

— AB:

In general, my recommendations to smart contract developers are just test, test, test. You should be absolutely testing your smart contracts, so much more than you think you need to be testing them. Clarinet’s a wonderful tool to do that. It'll also tell you what the cost of your operations is. You'll be able to see, "Okay, this defined public method in my smart contract", like if a Stacks block was just full of this defined public method, how many such transactions would fit in the block? You could see a number of like 900 and you could say, "Okay, that's like a pretty good number." Or you could see a number like 80 and you might start to worry that your transactions would turn out to be too expensive, or you might not get picked up very quickly. And you can compare these between SIP-012 and today, and see like, "Well, if SIP-012 rolls out, does this solve my cost problems?" And if it does, then maybe you wait for SIP-012 or you participate in getting SIP-012 activated, things like that.
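The "how many of these would fit in a block" figure can be approximated as follows. This is only a sketch of the arithmetic, not Clarinet's implementation: the block limit, the per-call costs, and the subset of cost dimensions shown are hypothetical values chosen for illustration.

```python
def transactions_per_block(tx_cost, block_limit):
    """Copies of one transaction that fit before any cost dimension runs out.

    Assumes at least one dimension of tx_cost is greater than zero.
    """
    return min(
        block_limit[dim] // cost
        for dim, cost in tx_cost.items()
        if cost > 0
    )

# Hypothetical block limit and per-call costs; not real network values.
BLOCK_LIMIT = {"runtime": 1_000_000, "read_count": 7_200, "write_count": 7_200}
cost_today = {"runtime": 12_500, "read_count": 50, "write_count": 20}
cost_proposed = {"runtime": 1_000, "read_count": 8, "write_count": 8}

fits_today = transactions_per_block(cost_today, BLOCK_LIMIT)
fits_proposed = transactions_per_block(cost_proposed, BLOCK_LIMIT)
print(fits_today, fits_proposed)
```

Because a block is full as soon as any one dimension is exhausted, the answer is the minimum across dimensions. In this example the same method goes from 80 calls per block to 900 under the cheaper assessment.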

"In general, my recommendations to smart contract developers are just test, test, test."

— GA:

Excellent. What are the next steps? I know that there's a bit of a tentative timeline, if you could share that. And then also any things we might look out for as this upgrade is potentially implemented that might impact, if anything.

— AB:

Yeah. So there's a couple of things happening. You can follow along in the implementation work stream. On the Stacks blockchain repository, there's a set of issues tagged next-costs. And there's also a public project board for, I think it's labeled Stacks 2.05, but that encompasses the SIP-012 changes if they activate. So, you can follow along there, comment on the goings on, and you can see the progress of that work there. Second thing you can do is there's a pull request on the Stacks governance repo for SIPs. That's the SIP-012 pull request. There's a lot of comments on there. You can read it, make your own comments, participate in that discussion and also follow along in the activation signaling process. So, SIP-012 talks a lot about how Stackers and other community members can be involved in the voting process for SIP-012 getting approved.

So, you can look there, get involved with that and follow along the progress, or if you are a Stacker you can participate in the voting once the tooling for that is live.

The final part of your question was estimated timelines. I think that it's still a little early to give a great timeline. Based on the discussions in the Stacks core devs discord channel and the public blockchain meetings, the idea is that we're not going to propose a block height, which would be like the time of the activation until at least half of the issues in the 2.05 project board have been reviewed, and closed, because that'll give us a much better sense of how to estimate launch time. But right now the target is the end of November or around there.

— GA:

All right. Awesome. Will any specific action be required for folks that are building apps through the change? Say, it's implemented, should people be looking out for something in particular? I understand there also might be some action that miners need to take to upgrade their software, but yeah, anything that the users might need to keep in mind?

— AB:

The people that will have to take action, or choose to not take action, are basically anyone who's running a Stacks blockchain node. So if, as part of your application, you're running a Stacks blockchain node and servicing API requests, you would need to upgrade that node in order to follow this 2.05 fork. If you don't want to follow the 2.05 fork, don't upgrade your node. And you'll be in Stacks 2.0. Miners, same thing. All of them are running the Stacks blockchain node in order to actively mine, so they would need to upgrade. So, yeah, but if you're just a smart contract developer, you launch your smart contract, you're not running a node yourself, then there's nothing you need to do.

Also nothing about the Clarity language itself is changing. So your smart contract today could be written exactly the same as your smart contract tomorrow. It just might have different costs.

— GA:

Thank you. Switching gears a little bit, another part of ongoing work on scalability is better fee estimation toward a more robust fee market on Stacks, and the corresponding implementation in the Hiro wallet. I'd love to hear more about this.

— AB:

Yeah, sure. So this work stream, unlike the SIP-012 work stream, doesn't change anything inherent about the network. It doesn't change the consensus rules of the network. It just adds API endpoints and suggests changes to miner behavior that improve how blocks are assembled throughout the network. To understand these changes, I think you need to first start with how miners evaluate transactions today. What's going on in the latest release version of the Stacks blockchain is that a naive miner will receive transactions from users as people propagate transactions around the network. And these transactions won't yet be included in a block. And so when a miner is assembling the block, the next block that they're trying to mine, they look at all these transactions that haven't been mined yet. And they come up with some way of ordering those transactions and then they try to include them in a block.

Once they reach the block limit, they say, "This is my block", announce it. And the block gets mined. What happens today in this ordering is basically the miner does two things. It first sorts all of the transactions by the total fee paid by the transaction. And then it figures out, based on the total fee paid by the transaction, what the total fees would be from a given Stacks account if all their transactions were included, and then it tries to start including transactions that way. So, there's a couple weird behaviors right now in the default miner that have to do with whether this account has a high fee transaction that was included before, or might get included later, such that the ordering isn't exactly "higher fee transactions get included more quickly". But for the most part, the way it works today is transactions are just considered by miners based on the raw fee that they pay.

This works okay. But the problem is that not all transactions are the same, right? My transaction, for which I pay five Stacks, might be a hundred times less expensive for the miner to include in a block than someone else's transaction that pays 20 Stacks. But the miner will include the 20 Stacks one, because they're just paying more than my transaction, even though in some sense, my transaction is paying more for the portion of the block that it's consuming.

In order to fix that situation, we have a set of changes called fee estimation. In fee estimation, basically what every node in the network is doing is, they're seeing transactions get processed, they come up with estimates for what portion of the block limit any given transaction is going to consume.

This way, when the miner is looking at the mempool, they can see not only the raw fee paid by the transaction, but also the portion of the block limit that they think this transaction is going to consume.

Based on those two values, they can determine an estimated fee rate so that they could see the transaction that I had that was five Stacks and only occupied one 100th of the block is actually a much more cost effective transaction to include than this other one that maybe pays a high fee, but is a hundred times more expensive.
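The contrast between ordering by raw fee and ordering by estimated fee rate can be sketched like this. It is a toy model under stated assumptions: the block capacity, the cost units, and the greedy selection are hypothetical, and the per-account grouping that the default miner performs is left out.

```python
from dataclasses import dataclass

BLOCK_LIMIT = 100  # hypothetical block capacity, in abstract cost units

@dataclass
class PendingTx:
    fee: int         # total fee offered, in STX
    cost_units: int  # estimated share of the block limit consumed

    @property
    def fee_rate(self):
        # Fee offered per unit of block capacity consumed.
        return self.fee / self.cost_units

def assemble_block(mempool, key):
    """Greedily include transactions in descending `key` order until full."""
    chosen, used = [], 0
    for tx in sorted(mempool, key=key, reverse=True):
        if used + tx.cost_units <= BLOCK_LIMIT:
            chosen.append(tx)
            used += tx.cost_units
    return chosen

# 100 cheap transactions (5 STX, 1 unit each) and one that pays 20 STX
# but is a hundred times more expensive to process.
mempool = [PendingTx(fee=5, cost_units=1) for _ in range(100)]
mempool.append(PendingTx(fee=20, cost_units=100))

by_raw_fee = assemble_block(mempool, key=lambda tx: tx.fee)
by_fee_rate = assemble_block(mempool, key=lambda tx: tx.fee_rate)

print(sum(tx.fee for tx in by_raw_fee))   # total fees when sorting by raw fee
print(sum(tx.fee for tx in by_fee_rate))  # total fees when sorting by fee rate
```

In this toy mempool, sorting by raw fee fills the block with the single 20 STX transaction, while sorting by fee rate fits all one hundred 5 STX transactions and collects 500 STX in fees.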

That's the broad set of changes. It's mostly focused on miner behavior, but in addition to that, we add endpoints that allow users to obtain these estimates, such that when their wallet is about to broadcast a transaction and decide what fee to pay it can get this estimate so that it knows like this is going to be an expensive transaction. I need to increase the fee, or this is a cheap transaction, and I can reduce the fee, things like that.
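On the wallet side, one way such an estimate could be turned into a fee is sketched below. Everything here is an assumption for illustration: the function name, the idea of picking a percentile from recently observed fee rates, and the units are hypothetical and do not describe the actual endpoints or the Hiro wallet.

```python
def suggest_fee(estimated_cost_units, recent_fee_rates, percentile=0.5):
    """Suggested fee = a fee rate picked from recent samples times estimated cost."""
    if not recent_fee_rates:
        raise ValueError("need at least one fee-rate sample")
    ranked = sorted(recent_fee_rates)
    index = min(int(percentile * len(ranked)), len(ranked) - 1)
    return ranked[index] * estimated_cost_units

# Hypothetical fee rates (fee per cost unit) seen in recent blocks.
recent_rates = [1, 2, 2, 3, 10]

cheap_tx_fee = suggest_fee(5, recent_rates)        # small estimated cost
expensive_tx_fee = suggest_fee(400, recent_rates)  # large estimated cost
print(cheap_tx_fee, expensive_tx_fee)
```

The point is only that the suggested fee scales with the estimated share of the block the transaction will consume, so an expensive transaction is prompted to pay more and a cheap one can pay less.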

"This way, when the miner is looking at the mempool, they can see not only the raw fee paid by the transaction, but also the portion of the block limit that they think this transaction is going to consume."

— GA:

Very cool. Thank you. And I am just curious about how people might think long term about this fee market, and just given what we've seen in other networks where things can almost become a little bit inhibitive for transactions. Anything that you would say about the fee market that's specific to Stacks?

— AB:

So I guess there's a couple of ways in which the fee market for Stacks will be slightly different than the fee market for other blockchains, but mostly it'll be similar to other blockchains just because you have a block limit, everyone's competing to get into the block and they compete with their fee rates. During periods of high congestion in the network, you're going to see high fees. That's the way life is in a blockchain. The big difference between Clarity and a lot of other blockchains that support smart contracts is the way that the fee is assigned for transactions. So, in Clarity or in the Stacks blockchain, each transaction just pays like a single fee amount. Like your transaction says, "I'm paying 10 Stacks." That is always the amount that your transaction will pay.

If that's not enough to get included in a block, then you're not included in a block. Other blockchains, like you can think of Ethereum or other blockchains that support smart contracts. The way that those work with their transaction fees is that you actually specify the maximum fee that you would ever be willing to pay. And then a fee rate such that when the transaction executes, you don't actually know how much transaction fee you're going to be paying until the transaction executes. So, there's a lot more uncertainty there on the user's end, and the model is a little bit less clear to users, and that can be a little bit more hostile.
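The two fee models described here can be contrasted with a small sketch. It follows the description above rather than the exact rules of any particular chain, and the function names and numbers are hypothetical.

```python
def fixed_fee_paid(stated_fee):
    # Stacks-style: the transaction states one fee and pays exactly that.
    return stated_fee

def metered_fee_paid(fee_rate, units_used, max_fee):
    # Metered style: pay rate * actual usage, never more than the stated maximum.
    return min(fee_rate * units_used, max_fee)

# With a fixed fee, the amount is known before the transaction is broadcast.
print(fixed_fee_paid(10))

# With a metered fee, the amount depends on what execution actually consumes.
print(metered_fee_paid(fee_rate=2, units_used=3, max_fee=10))
print(metered_fee_paid(fee_rate=2, units_used=8, max_fee=10))
```

In the fixed model the user sees the exact amount up front. In the metered model the same transaction could end up paying 6 or 10 depending on how execution goes, which is the uncertainty being described.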

— GA:

Awesome. Thank you. And so we are coming up on our time here. I feel like we could do many more episodes covering Clarity and other topics because of such a breadth and depth of knowledge here. You work a lot in public, on GitHub collaborating with folks that are maybe pseudonymous, or other entities. Any closing comments, or thoughts, or ways that the community can get involved that you'd like to share?

— AB:

Yeah. I guess, well, the one big thing I would say is if you are excited about these sets of changes like SIP-012 in particular, go take a look at the pull request on the Stacks governance org, participate in the vote. And if you want to get more involved in Stacks blockchain stuff, hang out on our Git repo. Issues are read, we have discussion posts, stuff like that. And PRs are always welcome. Reviews could be tedious, but definitely GitHub's the place to get involved.

— GA:

Great. And just to recap, these are some of the changes that are happening over the next month or so, essentially Q4 this year. And there's a lot that we can look out for with this improvement in the Stacks improvement proposal SIP-012, as well as these network fee improvements happening as well. And then I think we're going to have to touch base again, because there's a lot of proposals for introducing additional scalability efforts to the Stacks blockchain. We can dive into that in a future episode, but thank you so much for joining, Aaron.

— AB:

Yeah, of course. Thank you, Gina.

— GA:

Thanks everyone for tuning in!